Minimal Viable Mandelbrot

by Jeremy D. Frens

on June 19, 2016

part of the

Fractals in Elixir

series

Previously on Programming During Recess:

- The reboot — I’m writing a brand-new, totally improved program to generate fractals in Elixir.

- The output process — I use a selective

receiveto handle sequencing the output. - The process diagram — I diagrammed the stages (and processes) to generate fractals.

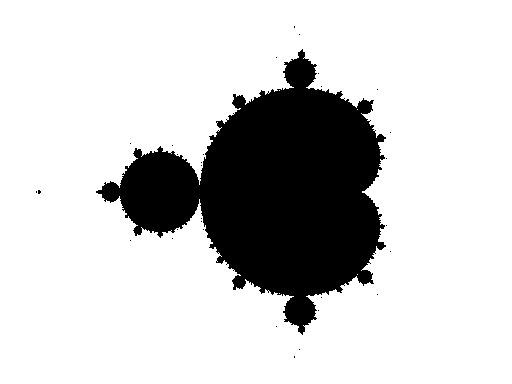

This week, I have created a minimal viable product. I have an escape-time worker to generate the Mandelbrot set. I fixed up the colorizer to paint the Mandelbrot set in black (on a white background). I have a picture.

My code is in my mandelbrot repo on Github tagged blog_2016_06_19.

Boom. Here is the thing.

It’s not too exciting except in the oh-wow-my-program-actually-generates-the-thing-I-want-it-to-generate sense. Let’s wait until I can generate the burning ship1 in some cool blue colors before we get too thrilled.

Mandelbrot worker: third verse, same as the first (and second)

Something kind of cool happened with the worker to generate the Mandelbrot set: it looks the same as the workers for generating the grid (the first verse) and for generating colors (the second verse).

defmodule Fractals.XWorker do

use GenServer

# Client

def start_link([options]) do

GenServer.start_link(__MODULE__, options, name: __MODULE__)

end

def x_action(pid, chunk) do

GenServer.cast(pid, {:x_action, chunk})

end

# Server

def init(options) do

{:ok, options}

end

def handle_cast({:x_action, {number, data}}, options) do

Task.start(fn ->

send_chunk({number, X.x_action(data, options)})

end)

{:noreply, options}

end

def send_chunk(chunk) do

NextWorker.color(NextWorker, chunk)

end

end

That’s what they all look like. Go ahead. Open them up in three browser windows next to each other:

However, I feel no need to abstract them all into a common implementation.2

- My

XWorkerabove is an adequate pseudo-code abstraction for discussion, but not perfect. (E.g.,GridWorkerdoes not receive a chunk.) - I don’t have enough worker modules to find a good abstraction.

- I’m kind of unsure how to build a good abstraction: macros, protocols, behaviours? (E.g., what’s the best way to abstract

NextWorker?) - The workers are too simple.

- The workers are not finished.

At the very least, I want to try some process pooling for each of these workers.

Performance surprise

My original Elixir program still works, and by default it uses just a single process for input, output, and all computations. While I was using it to compare outputs with my new program, I discovered something: my new multi-process program runs in the same amount of time.

In the first article in this series on the new program, I talked about how every intuition I had about dividing the program up into processes backfired on me. So to see that my new program with its processes was even close to the original program, I was really happy.

And then the new program got faster.

In the GridWorker and ColorizerWorker, I use Task.start to execute the work in a new Task. A GridWorker spawns just one Task that generates the whole grid and sends it out in chunks. The ColorizerWorker spawns a separate Task for each chunk. Those chunks run concurrently with each other and with the original ColorizerWorker.

The Task seems like a neutral, maybe good, idea for the GridWorker since it’s just one task. I was concerned, though, that the ColorizerWorker would flood the system with thousands of processes. When I implemented the EscapeTimeWorker, I did not spawn the Mandelbrot algorithm in a Task. I figured it would be just too much.

That’s when I noticed my new program was running as fast as my old program despite those Tasks.

As I was cleaning up the code, I thought that I’d at least try the Mandelbrot algorithm in a Task. And my code ran faster.

These numbers are not at all scientific, and I didn’t do anything to ensure that my laptop was nice and quiet, but I ran both programs three times on the same input file:

- original: 9.1s, 9.9s, 10.5s.

- new: 7.5s, 7.4s, 6.8s.

If you compare the median times, my new program runs 25% faster.

Explaining the performance

I’m not entirely sure why my new program wins.

My original intent for the new program was a pipeline of stages: generate the grid points, escape the grid points, color the grid points, and output the colored grid points. Each stage is kept busy concurrently with the others. As an experiment, I took all of the Task.starts out of my new version, and it slows down to about 8.5s which is still faster than the single-process original version.

So yeah for pipeline processes!

When I spawn Tasks for the escape-time algorithm and for coloring, I’m increasing the concurrency. The workers spend more of their time receiving chunks and spawning Tasks. The Tasks do the work with more concurrency within each stage.

So yeah for more concurrency!

But then why isn’t the system getting flooded with Tasks? This I don’t know. I figured this was the problem with concurrency in my old version.

I think the key is how the Tasks are spawned. My old program would chunk up the grid and then Task.start_link each chunk; then it would Task.await them all. This guarantees thousands of processes spawned at about the same time which linger around until they’re all awaited on. Then they all die.

My new program just starts Tasks and doesn’t join with them later. The task dies as soon as it sends its processed chunk to the next worker. So tasks don’t linger for very long.

So yeah for quick tasks?

But I’m unsure what this means for explicit pooling. My implicit (emergent?) pooling seems to be good enough. Maybe I could do better if I limited the number of escape-time tasks, but let the colorizers run wild. More importantly, the balance might be thrown off with other fractals like the Newton or Nova fractals or with other coloring schemes. So I’ll still implement process pools for the escape-time and colorizer stages, but I might wait on that until this spawn-Tasks-all-the-time approach plays itself out.

Next time

I’m a little unsure what to implement next. My current thinking is that what I’m calling a “worker” now may actually be more of a “dispatcher”. The spawned Tasks are the actual workers. I could make this change without changing the actual overall structure. It would then make it a bit easier to switch over to a pooling solution. I think this change will also make it easier to handle different fractal algorithms and different coloring schemes. And I’d like to get started on that sooner rather than later.